
Designing for Autonomy, Part 2: Digital Twins as a Medium for Reflexivity

This is part 2 of a two-part post on designing for autonomy. Read part 1 here.

How can Digital Twins help us better understand the mind, wellbeing, and self-development?

For its fall 2021 program, Digital Society School tasked a team of multidisciplinary researchers and designers (of which I am a part) with creating a Digital Twin of HvA students from the physical education (ALO) and Elite Student Athlete programs. The aim of the project is to keep track of their wellbeing, mental health, and self-development skills. The guiding idea behind this challenge is that an individualized profile of each student's brain would give the students and the school insights to better manage and fulfill their career expectations and to reduce drop-out rates.

This admittedly tricky challenge gave us a framework for a series of theoretical conversations, not only about the assumptions behind how a Digital Twin of the brain or of mental processes might work, but also about the very nature and purpose of Digital Twin technologies, and about our understanding of the mind, wellbeing, and self-development.

In this post, I expand on themes of value-oriented design that came up during our discussion with Jelger Kroese, designer, creative technologist, and ambassador of Digital Society School’s Digital Twin track (read part 1 here). During our exchange, we deliberated on how to translate philosophical and psychological concepts such as autonomy and emotional wellbeing into design practices.

The goal of this post is to further develop these concepts and their practical design implications in order to propose design guidelines that reframe Digital Twins (especially those concerning human actors) as a medium that facilitates a reflexive understanding of ourselves, our wellbeing, and the wellbeing of our communities.

Challenging the concept of Digital Twins

Currently a buzzword in tech-centered settings, Digital Twin technologies are regularly described in technical discourse as real-time, dynamic digital representations of physical entities that allow us to understand, predict, and optimize performance [1]. ‘Digital Twin’ is nevertheless a vague term, used mostly in industrial and manufacturing contexts, with no universally agreed-upon definition, and hardly distinguishable from a real-time, composite version of more generic and less buzzy terms such as ‘data visualization’ [2].

Even if the term has only recently gained traction, the idea behind a Digital Twin is not novel. Artificial representations with traits or abilities that differ from, enhance, or even surpass their organic originals are a crucial element of animistic thinking, that is, of attitudes that assign agency or foretelling qualities to inanimate objects (from zombies and living dolls to crystal balls, tarot cards, cursed paintings, and religious iconic idolatry) [3]. The expectations placed on digital representations do not fundamentally differ from these. What sets digital representations apart is that their calculating and predictive powers stem from their ability to process and formalize the world as large amounts of information in short time spans. Magical abilities are thus replaced by seemingly rational and formalized ones, yet the expectations remain.

‘Nooscope’: beyond copying the mind

The very idea that representations can have epistemic value, a transcendent quality that lies beyond their material composition, is fundamental to the undertakings of modern science [4]. In the same way that a map or a graph grants its users mediated or abstracted access to a previously unseen facet of the world, Digital Twin technologies aspire to be a tool to, in Matteo Pasquinelli’s words, “see and navigate the space of knowledge” [5]. That is, Digital Twins, rather than being mere copies of pre-existing physical entities, are a ‘Nooscope’ (from the Greek skopein ‘to examine, look’ and noos ‘knowledge’) [6]: an instrument that presents the world as a space where knowledge and sense (relations, patterns, and predictions not visible to the naked eye) occur, and not only as a site where objects rest indifferent to each other.

Mirrors and linear perspective are tools for producing artificial representations that create or reveal unseen aspects of the world and of ourselves. Left: Paolo Veronese, Venus at Her Mirror (c. 1585). Right: Hans Vredeman de Vries, Perspectival Subject (1604).

The mind is an epistemologically elusive object, continually entangled with other notions such as the biological brain or the theological soul. It is difficult even to claim that the mind is a definable and stable entity, because the mind is thought of as the condition for determining objects (i.e., the subject) but not itself an object. It is the interface through which the world is experienced, but not necessarily a part of this world. A Digital Twin of the mind, this nooscope, would be a tool enabling this backflip: the mind reflexively constituting itself as one of its objects and, thus, pulling itself back down to the world. That is, the mind would become an entity that can be described and about which predictions can be made, but also something that can be experienced objectively and externally. Therefore, the way in which this twin is framed and designed will provide the scaffolding for approaching the mind. This means that any twin already presupposes a theory of the object it observes, and thus reshapes that object according to its interpretative model.


Cybernetics and behaviorism: the mind as information feedback loops

Inasmuch as this twin is digital, the mind must be coded in advance as an object interpretable by digital technologies. This means that the mind must be constituted as information or, more precisely, as an informational feedback loop. The notion of information as we know it today is greatly indebted to Norbert Wiener’s cybernetic project [7]. Cybernetics drew together several scientific fields to develop a theory of information as input and output loops, in order to build predictive models for military use. To do so, cybernetics formalized the much older philosophical idea of recursivity and/or reflexivity (a property historically ascribed to the mind) into a conceptual abstraction [8]. This opened the door to interpreting every phenomenon as a looping informational system capable of adjusting future conduct based on past performance [9].

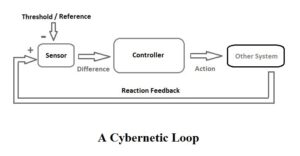

The core concept of cybernetics is circular causality, or feedback: observed outcomes are taken as inputs for further action in ways that support the maintenance of particular conditions. Schematization of a cybernetic loop by Wikipedia user Baango (18 May 2015).
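To make this circular causality a little more concrete, here is a minimal sketch in Python. It assumes the simplest textbook case, a proportional negative-feedback loop; the function name `feedback_loop` and its parameters (`setpoint`, `initial`, `gain`, `steps`) are hypothetical, chosen for illustration, and are not taken from Wiener or from the diagram above.

```python
def feedback_loop(setpoint: float, initial: float, gain: float, steps: int) -> list[float]:
    """Hypothetical sketch of a negative feedback loop (proportional correction).

    At each step the observed outcome is compared with a goal (the setpoint),
    and a fraction of the deviation, set by `gain`, is corrected.
    """
    state = initial
    history = [state]
    for _ in range(steps):
        error = setpoint - state      # deviation between the goal and the observed outcome
        state = state + gain * error  # the outcome is fed back as the basis for the next action
        history.append(state)
    return history


print(feedback_loop(setpoint=20.0, initial=10.0, gain=0.5, steps=5))
# [10.0, 15.0, 17.5, 18.75, 19.375, 19.6875]
```

Each pass feeds the observed outcome back in as the next input, and the deviation from the setpoint shrinks with every iteration. The loop has no way of changing its own goal: it only maintains a predefined condition, which is the orientation towards order, stability, and the correction of deviation that we want to question.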

Analogously, behavioral psychology attempted to describe and predict human behavior not by analyzing the inherent structure of an organism, but by making inferences from its past behavior (data). Psychology would then be the study of the acquisition and deployment of habits through stimulus-response associations [10]. In both cybernetics and behavioral psychology, there is an implied metaphysics (and political stance) in which information and the mind are self-regulating processes tending towards order and stability, and in which any waste of resources is marked as a deviation to be corrected.

Reflexivity and openness to promote autonomy

There is no denying that mental health and emotional wellbeing are correlated with biological processes in the brain. However, our team discussions led us away from theories predicated on linear input-output analysis and toward an approach that constitutes the mind as an open-ended, relational, and recursive process of interaction between the self, the other, and itself-as-an-other. Or, in the words of philosopher of technology Yuk Hui, we chose to conceive of the mind as:

“the capacity of coming back to itself in order to know itself and determine itself. Every time it departs from itself, it actualizes its own reflection in traces, which we call memory. […] Every reflective movement leaves a trace like a road mark, every trace presents a questioning, to which the answer can be addressed only by the movement in its totality.” [11]

Sketches of forms of recursion as featured in Yuk Hui’s book Recursivity and Contingency (2019). Notice that the loop never circles back to the same point. Each circulation brings forward new states (ascends to different levels) even when performing the same looping movement. Image from “Cybernetics for the Twenty-First Century: An Interview with Philosopher Yuk Hui” by Geert Lovink in e-flux Journal.

This conception of the mind is close to that of cybernetics’ feedback loops. Nevertheless, even if cybernetics and behaviorism might provide us with a theoretical framework, we want to distance ourselves from their goals. Where cybernetics was a method for reducing deviation in favor of control and prediction, we seek to foster self-development and autonomy. Where cybernetics viewed the world as immaterial informational processes, we want to use digital technologies to materialize the mind as shared, context-specific, embodied experiences.

This was a conscious choice to distance ourselves from the notion of Digital Twins as the profiling of individuals through data collection, since that notion treats human agency and wellbeing as fundamentally determined by past actions (data). Furthermore, it understands groups as the sum of individuals, thus failing to acknowledge collective values. Data can be a useful source for understanding our patterns and tendencies. However, data collection does not only identify existing behaviors but actively creates new ones. Individual profiling promotes behaviors such as the cultivation of the self, not in order to engage critically with the self and understand its relationship with its environment, but in order to generate more data to feed the system. Therefore, a Digital Twin that promotes autonomy and self-development should also reconsider the role of data collection as something more than the mere perpetuation of the feedback loop. Purposeful data collection practices would focus not on the gathering itself, but on the actions and experiences they promote among the people on whom they are deployed.

From Brain Profile to Nervous System

We first pitched this project as a communication tool rather than a profile of the brain, because we considered the purpose of such a profile to be better connecting coaches, mentors, and teachers with students. This implies creating channels of communication, that is, bringing forward human relations that were not previously there. The idea therefore slowly evolved into a way to represent these newly opened channels. This, in turn, led to the broader idea of the tool as an open-ended feedback system that senses and synthesizes how a community, rather than an individual, is feeling. It would be a system or, more specifically, an environment that lets its users gather, see, and hopefully feel a collective mood that would not be perceivable without the mediation of technology.

But then again, our track is called Digital Twins, and a Digital Twin is normally thought of as a real-time copy used to better manage pre-existing physical entities. Our pitch does not introduce a copy (a twin), but a lens to perceive and express something that was not previously noticed. What we would like to present is, perhaps, a profile of the school: not a dashboard that summarizes the school as a place or as a collection of separate individuals (data points), but one that shows, modifies, and brings forward new layers of meaning imperceptible to the naked eye, namely the connections between the individuals that make up the school.

From contemporary pop science to nineteenth-century pseudoscience, the compartmentalizing, classifying, and profiling of the brain has been used to promote specific social relations rooted in the desire for (self-)control through individualization. Left: Screenshot from the article “Reconcile your Right and Left Brain to Become a Better Entrepreneur” on Forbes.com (4 March 2017). Right: A definition of phrenology with chart from Webster’s Academic Dictionary (c. 1895).

Therefore, instead of the metaphor of a “brain profile”, we chose that of a “nervous system”. Ideally, an artificial nervous system would add a new layer to human interactions and activities. Its purpose would be to actively reframe existing ones by recursively sensing, representing, understanding, and bringing new meanings to them. This system could be thought of as a socio-technical device that would empower us to develop new senses through technology, allowing us to “preserve and renew our relations with other beings and the world itself” [12].

This is a very ambitious idea, as well as a very ambitious way to present it, but it can be materialized by designing the right interactions that allow the school to re-metaphor itself. At the end of the day, we want to use technology to bring forward spaces, moments, and interactions of recursivity or self-reflection: digital spaces where “thinking about thinking to change our thinking” [13] takes place. Through these, the students and the school itself would reflect on what they are, what they want to be, and how they can foster their own autonomy, both individually and in relation to a community they care about. This strikes us as a powerful idea, as we currently have an abundance of methods to represent and enhance the mind as an individual self, but few that provide the technological means for its realization outside its natural habitat, the brain. In other words, we lack tools that make visible and articulate the relations of the mind with its surrounding environment and community, providing us with new ways to experience ourselves and each other.

Design guidelines for Digital Twins to foster autonomy:

- Beyond copies: Digital Twins as an added layer of meaning to the physical world (nooscope).

- Developing new senses: Digital Twins can work as lenses for perceiving/interpreting data, channels of communication, or conduits for coordination.

- Focus on contextuality: How does the twin relate to the people and place in which it is deployed? What existing spaces, moments, and interactions is the twin modifying? What new ones is it enabling?

- From profiling individuals to developing agency: Digital Twins to challenge feedback loops, promote difference, recognize subjective experience, and enable novel ways to experience the self.

- From collection of past data to generation of reflexive data: Digital Twins as a medium that enables, acknowledges, and self-actualizes techno-social systems, environments and relations.

- From disembodied information to embodied material relationships: Digital Twins to analyze and comprehend shared emotions, common values, and institutions, rather than to individualize people as sets of information.

Written by Jordi Viader Guerrero: I am a practice-based researcher in philosophy of technology, internet culture, and new media. My work is chiefly focused on articulating digital culture within wider cultural, political, and epistemic logics. By conceiving technology as a tool of production, destruction, and expression of identities, I am drawn to issues raised by our inescapably “technological nature”. Most recently, I attempted to use TikTok’s scrolling UI to formulate a general theory of scrolling itself. At DSS, I am currently creating digital doppelgangers to allow us to experience and understand our emotions in new ways.

Read the interview here.

See our project page here.

References

[1] Melesse, Tsega Y., Valentina Di Pasquale, and Stefano Riemma. “Digital Twin models in industrial operations: State-of-the-art and future research directions.” IET Collaborative Intelligent Manufacturing 3, no. 1 (March 2021): 37–47.

[2] For a list of several common, yet non-fundamental, traits of Digital Twin technologies see our main project page and: Korenhof, Paulan, Vincent Blok, and Sanneke Kloppenburg. “Steering Representations — Towards a Critical Understanding of Digital Twins.” Philosophy & Technology 34 (October 2021): 1751–1773.

[3] Teixeira Pinto, Ana. “The Pigeon in the Machine: The Concept of Control in Behaviorism and Cybernetics.” In Alleys of Your Mind: Augmented Intelligence and Its Traumas, edited by Matteo Pasquinelli, 23–34. Lüneburg: meson press, 2015.

[4] Mitchell, W. J. T. “What Do Pictures Want?” In What Do Pictures Want? The Lives and Loves of Images, 28–56. Chicago: University of Chicago Press, 2005.

[5] Pasquinelli, Matteo, and Vladan Joler. “The Nooscope Manifested: Artificial Intelligence as Instrument of Knowledge Extractivism.” KIM HfG Karlsruhe and Share Lab. Karlsruhe, May 1, 2020. https://nooscope.ai/.

[6] Ibid.

[7] The original formulation of the cybernetic project can be found in: Wiener, Norbert. The Human Use of Human Beings: Cybernetics and Society. Cambridge, MA: Da Capo Press, 1988.

[8] Lovink, Geert, and Yuk Hui. “On Recursivity and Contingency: Interview with Yuk Hui by Geert Lovink.” Institute of Network Cultures. September 5, 2019. https://networkcultures.org/geert/2019/09/05/on-recursivity-and-contingency-interview-with-yuk-hui-by-geert-lovink/.

[9] Teixeira Pinto, “The Pigeon in the Machine: The Concept of Control in Behaviorism and Cybernetics,” 29.

[10] This is John B. Watson’s account of psychology as an objective science based on Ivan Pavlov’s study of conditioned reflexes. See: Teixeira Pinto, “The Pigeon in the Machine: The Concept of Control in Behaviorism and Cybernetics,” 26.

[11] Lovink and Hui. “On Recursivity and Contingency: Interview with Yuk Hui by Geert Lovink.”

[12] Hui, Yuk. Art and Cosmotechnics. Minneapolis: University of Minnesota Press (e-flux series), 2020.

[13] This simplified but catchy definition of self-recursivity is taken from: Woodard, Ben. “Loops of Augmentation: Bootstrapping, Time Travel, and Consequent Futures.” In Alleys of Your Mind: Augmented Intelligence and Its Traumas, edited by Matteo Pasquinelli, 158. Lüneburg: meson press, 2015.